Machine Learning Engineer Resume Guide

Learn how to write a machine learning engineer resume that proves you shipped models, built reliable data and inference pipelines, ran credible experiments, and drove measurable business results.

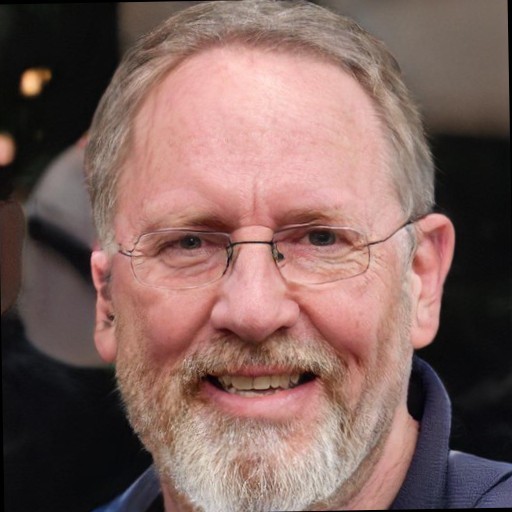

Markus Fink

Senior Technical Recruiter, ex-Google, Airbnb

Target the Right ML Role

A strong machine learning engineer resume starts by matching the actual job. Many AI and ML resumes sound generic because they mix research, modeling, data science, platform, and LLM application work into one undifferentiated story. Pick the role first, then choose bullets that reinforce that target.

ML Engineer

Lead with training pipelines, feature engineering, model serving, monitoring, retraining, and production reliability.

Applied Scientist

Lead with experimentation, model selection, offline and online evaluation, and product metric movement.

Research Scientist

Lead with publications, benchmarks, novel architectures, and technical originality.

AI Engineer

Lead with LLM applications, retrieval, prompt and eval workflows, safety, and system design.

Decision rule

If the job description mentions deployment, latency, pipelines, monitoring, or platform ownership more than papers or benchmarks, your resume should read like an ML engineer resume, not a research summary.

If your resume uses every one of these labels interchangeably, reviewers may assume you do not yet understand where you fit best.

Show Shipped ML Systems

The best machine learning resume examples do not stop at model training. They explain what shipped, how predictions were generated, what data powered the system, how experiments were evaluated, and what changed for the business after launch.

- Data pipelines: describe labeling workflows, feature freshness SLAs, validation checks, backfills, deduping, leakage prevention, or streaming and batch architecture.

- Training and experimentation: show how you compared baselines, selected metrics, tuned models, or decided not to ship a more complex approach.

- Inference and serving: mention online inference, batch scoring, retrieval plus reranking, caching, GPU or CPU tradeoffs, latency budgets, and fallback behavior.

- Monitoring and retraining: include drift checks, model quality dashboards, alerting, retraining triggers, or incident prevention.

- Business integration: connect the system to ranking, recommendations, fraud, search, forecasting, support automation, or other product decisions.

Decision rule

If a bullet could describe a notebook experiment that never launched, add the production detail that proves it shipped: traffic handled, latency target, retraining cadence, failure mode, or downstream metric moved.

Good ML resumes also show tradeoff thinking. Sometimes the strongest story is not a higher offline score. It is that you simplified a fragile data pipeline, reduced inference cost while holding quality steady, or caught model drift before it hurt customers.

Use Metrics That Match Outcomes

Model metrics alone are rarely enough. Accuracy, precision, recall, F1, AUC, BLEU, or win rate only become convincing when they are paired with inference performance, cost, reliability, and business impact.

- Model quality: choose the metric that matches the actual decision problem, not just the easiest benchmark to report.

- Experimentation: show baseline versus candidate model results, A/B test outcomes, or why you kept a simpler model in production.

- Serving performance: include prediction latency, throughput, tail latency, GPU utilization, cache hit rate, or batch runtime.

- Cost: quantify inference spend, training efficiency, annotation cost, or storage and compute reductions.

- Product impact: tie model work to conversion, engagement, fraud captured, support deflection, revenue, or analyst time saved.

- Operational quality: mention drift incidents avoided, retraining stability, fallback success rate, or on-call noise reduced.
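If you plan to cite serving performance, it helps to know how the number was computed. The sketch below is a minimal illustration, not a prescribed method: the function name `latency_summary` and the sample latencies are hypothetical, and it assumes you have per-request latencies in milliseconds. It shows why tail latency (p99) can tell a very different story from the average.

```python
import statistics


def latency_summary(samples_ms):
    """Summarize per-request latencies (ms) as p50, p95, and p99.

    Hypothetical helper for illustration only.
    """
    # quantiles(n=100) returns 99 cut points; index k-1 is the k-th percentile.
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98]}


# Hypothetical data: 95 fast requests and 5 slow outliers.
samples = [20.0] * 95 + [200.0] * 5
summary = latency_summary(samples)
mean_ms = statistics.mean(samples)

print(f"mean={mean_ms:.1f} ms, p50={summary['p50']:.1f} ms, p99={summary['p99']:.1f} ms")
```

In this made-up data the median stays at the fast value while p99 lands on the slow outliers, which is why a bullet that names the percentile is more credible than one that only says "reduced latency".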

Example framing

Instead of saying only that you improved recall by 6%, explain that you increased recall by 6%, held precision flat, and shipped the model into a review queue that recovered $1.2M in annualized fraud losses.
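The fraud framing above depends on two metrics moving independently, so it is worth checking the arithmetic before you put it on a resume. The sketch below is a hedged illustration: the confusion counts are invented, `precision_recall` is a hypothetical helper, and it assumes the 6% gain means 6 percentage points of recall.

```python
def precision_recall(tp, fp, fn):
    """Compute precision and recall from confusion-matrix counts.

    Hypothetical helper for illustration only.
    """
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Invented counts: 1,000 true fraud cases in the evaluation set.
base_precision, base_recall = precision_recall(tp=800, fp=200, fn=200)
cand_precision, cand_recall = precision_recall(tp=860, fp=215, fn=140)

print(f"baseline:  precision={base_precision:.2f}, recall={base_recall:.2f}")
print(f"candidate: precision={cand_precision:.2f}, recall={cand_recall:.2f}")
```

With these assumed counts the candidate catches 60 more fraud cases while the share of flagged transactions that are truly fraudulent stays the same. That pairing is what makes the claim credible to a reviewer.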

If a metric can be gamed, add context. A model that improved offline precision but hurt customer conversion after launch is not a win. Hiring managers appreciate candidates who understand that.

Choose the Right ML Skills

List the stack that supports your target role, not every ML acronym you have seen online. A focused skills section makes the rest of the resume more believable.

Languages and Data

Python and SQL are table stakes for many roles. Add Spark, Java, Scala, or C++ when they were part of real training, feature, or serving systems.

Frameworks

PyTorch, TensorFlow, JAX, scikit-learn, and Hugging Face matter most when the bullets around them explain what you trained, evaluated, or shipped.

MLOps and Platform

Feature stores, Airflow, Kubeflow, model registries, experiment tracking, orchestration, and cloud tooling are strong signals for ML engineer roles.

Evaluation and Data Quality

If you built offline eval suites, human review workflows, monitoring checks, or dataset validation, include them. These are often stronger signals than another model acronym.

Decision rule

Only list a tool if you could defend it in an interview with a concrete example of where you used it and why it mattered.

Frame Research for Industry

A research-heavy background is not a weakness, but the framing has to match the role. Industry teams usually care less about novelty by itself and more about whether your work shipped, scaled, held up in production, or changed a product decision.

For research roles

Lead with publications, benchmark improvements, novel methods, open-source adoption, and technical depth.

For industry roles

Lead with deployment, inference constraints, experimentation discipline, business impact, cost control, and production system quality.

Rewrite pattern

Instead of saying you proposed a new architecture, say you proposed a new architecture, beat the baseline by 4%, then productionized it into a ranking service handling 2M requests per day with a 70 ms latency target.

A candidate who can bridge both worlds is especially strong. If that is you, show the transition clearly: what you invented, what you shipped, and what changed once real users interacted with the system.

ML Engineer Resume Examples

These machine learning resume examples work because they combine system scope, model context, operational constraints, and measurable outcomes.

Strong

Built a retrieval and reranking pipeline for customer support search, improving successful article resolution by 14% while cutting average inference cost per query by 37% through caching and a smaller cross-encoder.

Strong

Owned the training, feature pipeline, and online serving workflow for a fraud model scoring 9M transactions per day, reducing false positives by 18% without increasing approval latency.

Strong

Designed an experimentation framework for recommendations, replacing ad hoc offline comparisons with holdout evaluation and A/B testing that increased add-to-cart rate by 6.2% on shipped variants.

Strong

First-authored a paper on efficient sequence modeling, then productionized the approach into a candidate-ranking service that cut GPU memory use by 28% and unlocked broader internal experimentation.

Weak

Improved model accuracy using Python and TensorFlow.

Why the weak example fails

It does not say what model you built, what data it used, whether it shipped, how it was evaluated, what constraints mattered, or why the improvement changed anything for the business.